zk Proofs and AI for Fraud-Proof Web3 Bounty Task Verification

In the high-stakes arena of Web3 bounties, where developers hunt vulnerabilities and innovators chase rewards, fraud lurks like a shadow. Fake submissions flood platforms, verification drags on for weeks, and trust erodes as AI-generated noise drowns out genuine work. Traditional bug bounty programs, once the gold standard for securing decentralized projects, now buckle under these pressures. Enter zero-knowledge proofs paired with artificial intelligence: a duo poised to forge fraud-proof bounty platforms that preserve privacy while delivering ironclad verification.

This convergence isn’t hype; it’s a fundamental shift. Platforms like zkverifiedtasks.com are already pioneering AI task verification in Web3, using ZK proofs to confirm task completion without exposing methods or data. Imagine a bounty hunter proving they cracked a smart contract exploit, all while keeping their clever workaround secret. No more disputes, no leaked IP, just pure, verifiable truth.

Unraveling the Chaos of Current Bounty Ecosystems

Web3 bounty programs face existential threats. Forbes highlights how AI noise swamps submissions with low-effort fakes, verification delays stretch into months, and researcher distrust festers from opaque processes. Immunefi’s zkVerify bug bounties underscore the pain: modular networks offload heavy proof verification to cut costs, yet core issues persist. LinkedIn voices like John A. warn that even zk proofs don’t auto-fix authentication gaps, as seen in zkLogin analyses.

Core Web3 Bounty Challenges

- Fraudulent submissions via AI spam flood programs, as highlighted in Forbes on bug bounty struggles.
- Slow manual verification causes delays, exacerbating issues in high-volume Web3 tasks per industry analyses.
- Privacy risks expose bug hunters’ strategies, undermining participation as noted in zkVerify discussions.
- Distrust between hunters and projects erodes collaboration, a key pain point in platforms like Immunefi.
- Scalability limits hinder high-volume programs, addressed by innovations like zkVerify mainnet.

These aren’t isolated gripes; they’re systemic failures. HackenProof’s crowdsourced audits for crypto projects surface vulnerabilities before they are exploited, but scaling to thousands of tasks demands more. Without intervention, zero-knowledge proofs for bounties remain underutilized, leaving billions in assets exposed to potential exploits.

Zero-Knowledge Proofs as the Ultimate Verifier

At its core, a zero-knowledge proof lets you prove a statement’s truth without revealing underlying details. In Web3 bounties, this means attesting to a task’s completion (say, finding a reentrancy bug) via a succinct proof that can be verified on-chain in milliseconds. zkVerify’s mainnet launch changes the game: it is a dedicated blockchain for proof verification across Groth16, UltraPlonk, and RISC Zero systems. It slashes verification costs for projects, enabling even small DAOs to run secure bounties.
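To make "prove without revealing" concrete, here is a minimal sketch of a Schnorr proof of knowledge, made non-interactive with the Fiat-Shamir heuristic. It is not one of the proof systems named above and says nothing about how zkVerify works internally. The group parameters and the function names (`keygen`, `prove`, `verify`) are assumptions chosen for illustration, and the parameters are far too small to be secure.

```python
import hashlib
import secrets

# Toy group parameters (illustrative only, far too small for real use):
# P is a safe prime, Q = (P - 1) // 2 is prime, and G generates the
# subgroup of order Q.
P = 2039
Q = 1019
G = 4


def _challenge(public_key: int, commitment: int) -> int:
    """Fiat-Shamir challenge: hash the public transcript into Z_Q."""
    data = f"{G}|{public_key}|{commitment}".encode()
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q


def keygen() -> tuple[int, int]:
    """Return (secret, public_key) with public_key = G^secret mod P."""
    secret = secrets.randbelow(Q - 1) + 1
    return secret, pow(G, secret, P)


def prove(secret: int, public_key: int) -> tuple[int, int]:
    """Prove knowledge of `secret` without sending it to the verifier."""
    nonce = secrets.randbelow(Q - 1) + 1      # fresh randomness per proof
    commitment = pow(G, nonce, P)
    c = _challenge(public_key, commitment)
    response = (nonce + c * secret) % Q
    return commitment, response


def verify(public_key: int, proof: tuple[int, int]) -> bool:
    """Accept iff G^response == commitment * public_key^c (mod P)."""
    commitment, response = proof
    c = _challenge(public_key, commitment)
    return pow(G, response, P) == (commitment * pow(public_key, c, P)) % P


if __name__ == "__main__":
    secret, public_key = keygen()
    proof = prove(secret, public_key)
    print("proof accepted:", verify(public_key, proof))
```

The verifier only ever sees the public key, the commitment, and the response. In a bounty setting the "secret" would be the exploit witness and the statement would be far richer, which is why general-purpose systems such as Groth16 are used instead of a single discrete-log relation.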

Privacy-preserving by design, ZK sidesteps the pitfalls of public disclosures. Bounty hunters submit proofs instead of full reports, shielding proprietary techniques from copycats. This builds empires on fundamentals, as on-chain data confirms legitimacy while market trends reward early adopters. Deutsche Bank’s take on ZK in blockchain finance echoes this: verifiable credentials automate KYC/AML without data leaks, a blueprint for bounties.

AI Amplifies ZK for Intelligent, Fraud-Resistant Analysis

ZK alone is powerful, but AI turbocharges it into a verification powerhouse. zkVerify for AI tackles verifiable machine learning, proving model inference without exposing training data. In bounties, AI scans submissions pre-ZK, filtering spam and flagging anomalies with pattern recognition honed on vast datasets.

Picture this: an AI agent analyzes code diffs for exploit patterns, generates a preliminary score, then bundles it into a ZK proof for final on-chain validation. CoinDesk notes AI agents crave ZK identities for trustless interactions; bounties extend this to human hunters. zkSecurity’s bug hunts probe if AI can unearth ZK circuit flaws, hinting at self-improving systems where AI verifies AI.
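The triage step described above can be sketched as follows. This is a deliberately simple stand-in: regular-expression heuristics replace the trained model, and every name here (`EXPLOIT_PATTERNS`, `SPAM_MARKERS`, `triage`, `TriageResult`) is hypothetical. The SHA-256 digest is only a fingerprint that a later proof could reference; it is not itself a zero-knowledge proof.

```python
import hashlib
import re
from dataclasses import dataclass

# Hypothetical heuristics standing in for a trained model.
EXPLOIT_PATTERNS = {
    "delegatecall": re.compile(r"\bdelegatecall\b"),
    "low_level_value_call": re.compile(r"\.call\{value:"),
    "tx_origin_auth": re.compile(r"\btx\.origin\b"),
}
SPAM_MARKERS = ("as an ai language model", "lorem ipsum")


@dataclass(frozen=True)
class TriageResult:
    score: float               # 0.0 (likely noise) to 1.0 (worth full verification)
    matched: tuple[str, ...]   # names of the heuristics that fired
    digest: str                # SHA-256 fingerprint of the submission


def triage(report: str, code_diff: str) -> TriageResult:
    """Score a submission before any proof is generated or verified."""
    lowered = report.lower()
    if any(marker in lowered for marker in SPAM_MARKERS):
        matched: tuple[str, ...] = ()
        score = 0.0
    else:
        matched = tuple(sorted(
            name for name, pattern in EXPLOIT_PATTERNS.items()
            if pattern.search(code_diff)
        ))
        score = len(matched) / len(EXPLOIT_PATTERNS)
    payload = (report + "\x00" + code_diff).encode()
    return TriageResult(score, matched, hashlib.sha256(payload).hexdigest())


if __name__ == "__main__":
    result = triage(
        "Reentrancy in withdraw()",
        'msg.sender.call{value: amount}(""); require(tx.origin == owner);',
    )
    print(result.score, result.matched)
```

A production system would swap the regex table for a model and calibrate the score against labelled submissions; the shape of the output (score, evidence, fingerprint) is the part that carries over.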

ZKML bridges AI/ML and Web3, per HackMD, unlocking privacy-preserving Web3 tasks. Medium’s zkVerify intro stresses compliance: identities or transactions can be verified without revealing sensitive data. Together, AI and ZK craft fraud-proof workflows in which only legitimate work passes verification.

Platforms like zkverifiedtasks.com embody this synergy, streamlining fraud-proof bounty workflows in which AI triages submissions and ZK seals the deal. Their approach integrates on-chain metrics with AI-driven sentiment analysis, mirroring how I evaluate tokenomics: adoption curves validated by immutable proofs, not promises.

On submission, the platform’s AI oracle preprocesses the report: anomaly detection flags fabricated ("deepfake") code, and natural language processing vets report clarity. Proofs for submissions that pass are posted on-chain via zkVerify’s aggregator and verified in under 10 seconds across systems like UltraPlonk. Rewards auto-dispense if the criteria match, and disputes evaporate because the math doesn’t lie. This isn’t incremental; it’s exponential scalability for privacy-preserving Web3 tasks, handling 10x the volume without human overhead.
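The submission-to-payout flow above can be sketched as a small state machine. This is a sketch under stated assumptions, not the platform’s implementation: `Bounty`, `Submission`, `Status`, and `settle` are hypothetical names, and `verify_proof` is an injected placeholder for whatever on-chain verifier is used rather than a real zkVerify API.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Optional


class Status(Enum):
    REJECTED_BY_TRIAGE = "rejected_by_triage"
    PROOF_INVALID = "proof_invalid"
    PAID = "paid"


@dataclass(frozen=True)
class Submission:
    hunter: str
    triage_score: float   # output of the AI preprocessing step
    proof: bytes          # opaque proof blob


@dataclass
class Bounty:
    reward: int
    min_triage_score: float
    paid_to: Optional[str] = None


def settle(
    bounty: Bounty,
    submission: Submission,
    verify_proof: Callable[[bytes], bool],   # hypothetical verifier hook
) -> Status:
    """Gate on triage, then on proof validity, then release the reward once."""
    if bounty.paid_to is not None:
        raise ValueError("bounty already paid")
    if submission.triage_score < bounty.min_triage_score:
        return Status.REJECTED_BY_TRIAGE
    if not verify_proof(submission.proof):
        return Status.PROOF_INVALID
    bounty.paid_to = submission.hunter
    return Status.PAID


if __name__ == "__main__":
    bounty = Bounty(reward=1_000, min_triage_score=0.5)
    accept_ok = lambda proof: proof == b"ok"   # stand-in verifier for the demo
    print(settle(bounty, Submission("carol", 0.9, b"ok"), accept_ok))
```

Keeping the verifier as a parameter makes the ordering explicit: cheap AI triage runs first, the proof check is the only thing that can release funds, and a bounty can be paid at most once.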

zkSecurity’s experiments with AI bug hunting in ZK circuits add another layer. Can neural nets spot flaws in Groth16 setups? Early results suggest yes, with AI proposing circuits that self-audit via recursive proofs. Pair this with zkVerify’s AI use cases, such as proving LoRA adaptations without revealing model weights, and bounties evolve into proactive defense nets that preempt exploits before a bounty is even posted.

Overcoming Hurdles: From Theory to Empire-Building

Skeptics point to compute costs and UX friction, valid concerns in nascent tech. Yet zkVerify’s mainnet counters this: off-chain aggregation batches proofs, slashing gas by 90% versus Ethereum-native verification. Adoption metrics tell the tale; projects like modular rollups already integrate, per Immunefi bounties. My lens on fundamentals sees parallels to early ERC-20 days: clunky at launch, dominant post-refinement.
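One common way to batch many verified proofs into a single on-chain commitment is a Merkle tree: only the root is posted, and each proof’s inclusion is checked against it with a logarithmic-size path. The sketch below assumes that technique for illustration; zkVerify’s actual aggregation format is not described here, and the function names are hypothetical.

```python
import hashlib


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def _leaf_level(leaves: list[bytes]) -> list[bytes]:
    # Domain-separate leaves (0x00) from inner nodes (0x01).
    return [_h(b"\x00" + leaf) for leaf in leaves]


def _parent_level(level: list[bytes]) -> list[bytes]:
    return [_h(b"\x01" + level[i] + level[i + 1]) for i in range(0, len(level), 2)]


def merkle_root(leaves: list[bytes]) -> bytes:
    """Fold a batch of proof identifiers into one 32-byte commitment."""
    if not leaves:
        raise ValueError("empty batch")
    level = _leaf_level(leaves)
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])   # toy padding: duplicate the last node
        level = _parent_level(level)
    return level[0]


def merkle_path(leaves: list[bytes], index: int) -> list[tuple[bytes, bool]]:
    """Return (sibling_hash, sibling_is_left) pairs from leaf to root."""
    level = _leaf_level(leaves)
    path = []
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])
        sibling = index ^ 1
        path.append((level[sibling], sibling < index))
        level = _parent_level(level)
        index //= 2
    return path


def verify_inclusion(leaf: bytes, path: list[tuple[bytes, bool]], root: bytes) -> bool:
    """Recompute the root from one leaf and its path."""
    node = _h(b"\x00" + leaf)
    for sibling, sibling_is_left in path:
        node = _h(b"\x01" + (sibling + node if sibling_is_left else node + sibling))
    return node == root


if __name__ == "__main__":
    batch = [f"proof-{i}".encode() for i in range(5)]
    root = merkle_root(batch)
    print(verify_inclusion(batch[3], merkle_path(batch, 3), root))
```

Because one root covers the whole batch, the fixed cost of the on-chain write is shared across every proof in it, which is the intuition behind the savings claimed above. The duplicate-last-node padding is kept for brevity and is not suitable for production trees.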

Regulatory tailwinds amplify momentum. Zero-knowledge proofs enable compliant bounties, verifying hunter identities or task impacts without dumping PII, as Medium analyses note. In a post-FTX world, where trust deficits cost billions, ZK-verified bounties fortify decentralized finance against both hackers and watchdogs. AI’s role? Bias-mitigated oracles ensure fair scoring, with ZK attesting to model fidelity.

Challenges persist: interoperability across proof systems calls for standards bodies like ZKProof.org to coordinate. AI hallucination risks? Mitigate them via hybrid human-AI juries for edge cases, with the outcome ZK-wrapped. But the trajectory is clear; platforms ignoring this stack risk obsolescence, while pioneers capture network effects.

The Horizon: Web3 Bounties Reimagined

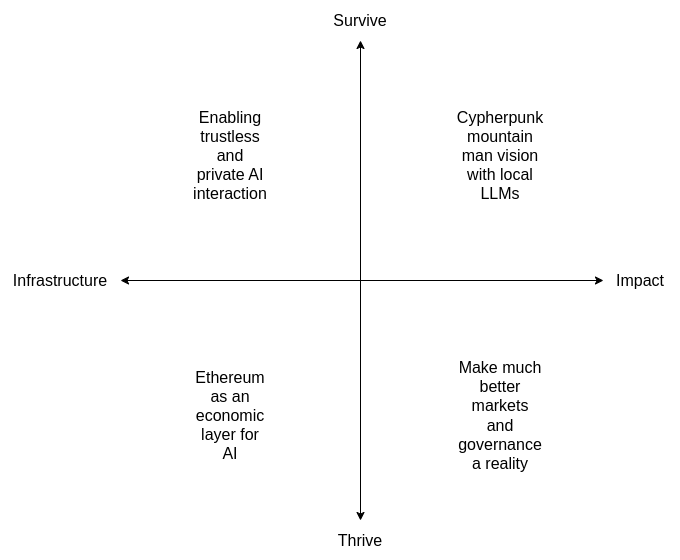

Envision autonomous bounty markets: AI agents propose tasks based on on-chain risk signals, hunters compete via ZK bids, verification settles atomically. CoinDesk’s AI agent identity thesis fits seamlessly; ZK credentials let bots hunt solo, earning tokens that compound into DAOs. HackMD’s ZKML vision bridges to this, where models train on bounty data under differential privacy, spawning smarter verifiers.

For developers and projects, the ROI is stark: reduced exploit losses, faster iteration, and talent drawn by fair pay. Bounty hunters gain leverage; skills compound privately, and reputations accrue on-chain. Web3’s empire-builders will prioritize these tools, because fundamentals (verifiable work over vaporware) dictate survival. zkverifiedtasks.com leads, but the protocol wars loom: open-source ZK-AI stacks could standardize, birthing a verification layer rivaling Layer 1s.

This fusion doesn’t just fix bounties; it redefines decentralized collaboration. Privacy intact, fraud exiled, innovation unleashed – the next cycle’s security scaffold stands ready.